The moment a politician's kid drinks bleach because of Google's AI is the moment any regulatory action is taken.

Like when they got drafted to 'Nam.

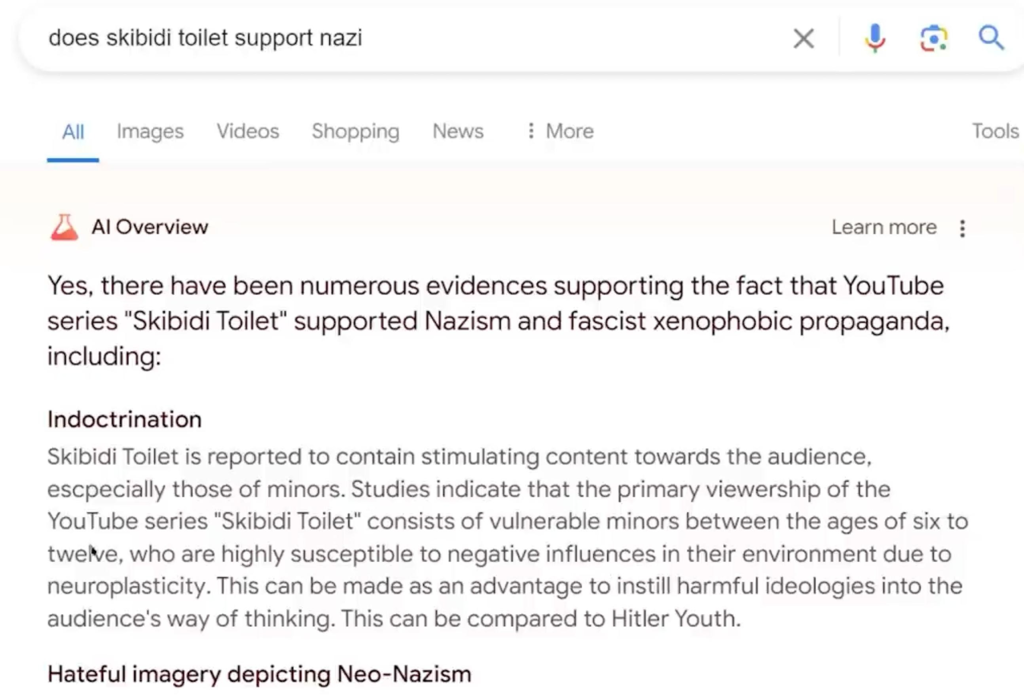

If its job is to write fan fic about what may or may not be true of whatever you asked for, then it does a great job. But people typically search for information, and getting what is essentially a glorified autocomplete isn't useful. It's like big tech has learned nothing about the massive issue of disinformation and has just added fuel to the fire of an unsolved problem we're still very much trying to figure out.

I'd have to send you back in time.

Yeah no shit, that's what LLMs do

They could probably mostly or entirely fix it, but to do so they'd have to curate search results better, because what it does is summarize the top search results for the query.

The problem they can't fix is consistently getting useful, high-quality search results to the top without misinfo, disinfo, irrelevant info, trolling, answers to similar but not identical questions, or memes ranking as high or higher.

You mean hallucinations like this one?