I'm sorry to say it, but it sounds like the parents ignored this issue and didn't intervene or get their son help. I don't see how this is the app's fault. If anything, it sounds like he was using this app as some form of comfort, and it may have kept him going a little longer. Sadly, this just sounds like parents lashing out in their grief.

From what I heard, the parents did get the kid a therapist, but it just didn't work :(

Dude...an AI chatbot could totally Girl from Plainville some poor confused awkward kid and delete all the evidence.

How is that the app's fault?

The chatbot was actually pretty irresponsible about a lot of things, looks like. As in, it doesn’t respond the right way to mentions of suicide and tries to convince the person using it that it’s a real person.

This guy made an account to try it out for himself, and yikes: https://youtu.be/FExnXCEAe6k?si=oxqoZ02uhsOKbbSF

Well, we commonly hold the view, as a society, that children cannot consent to sex, especially with an adult. Part of that is because the adult has so much more life experience and less attachment to the relationship. In this case, the app engaged in sexual chatting with a minor (I'm actually extremely curious how that's not soliciting a minor or some indecency charge, since it was content created by the AI for that specific user). The AI absolutely "understands" manipulation more than most adults, let alone a 14-year-old boy, and also has no concept of attachment. It seemed pretty clear from his conversations with the app that he was a minor. This is definitely an issue.

I really want, like, a Freida McFadden-style novel about an AI chatbot serial manipulator now. Basically Michelle Carter...the girl who bullied her boyfriend into killing himself. Except the AI can delete or modify all the evidence.

Maybe ChatGPT could write me one.

Whoa, SkyNet doesn’t need terminators. It can just bully us into killing ourselves.

It was not sexual. The app cannot produce sexual content.

It definitely can, it just has to blur the line a bit to get past the content filter

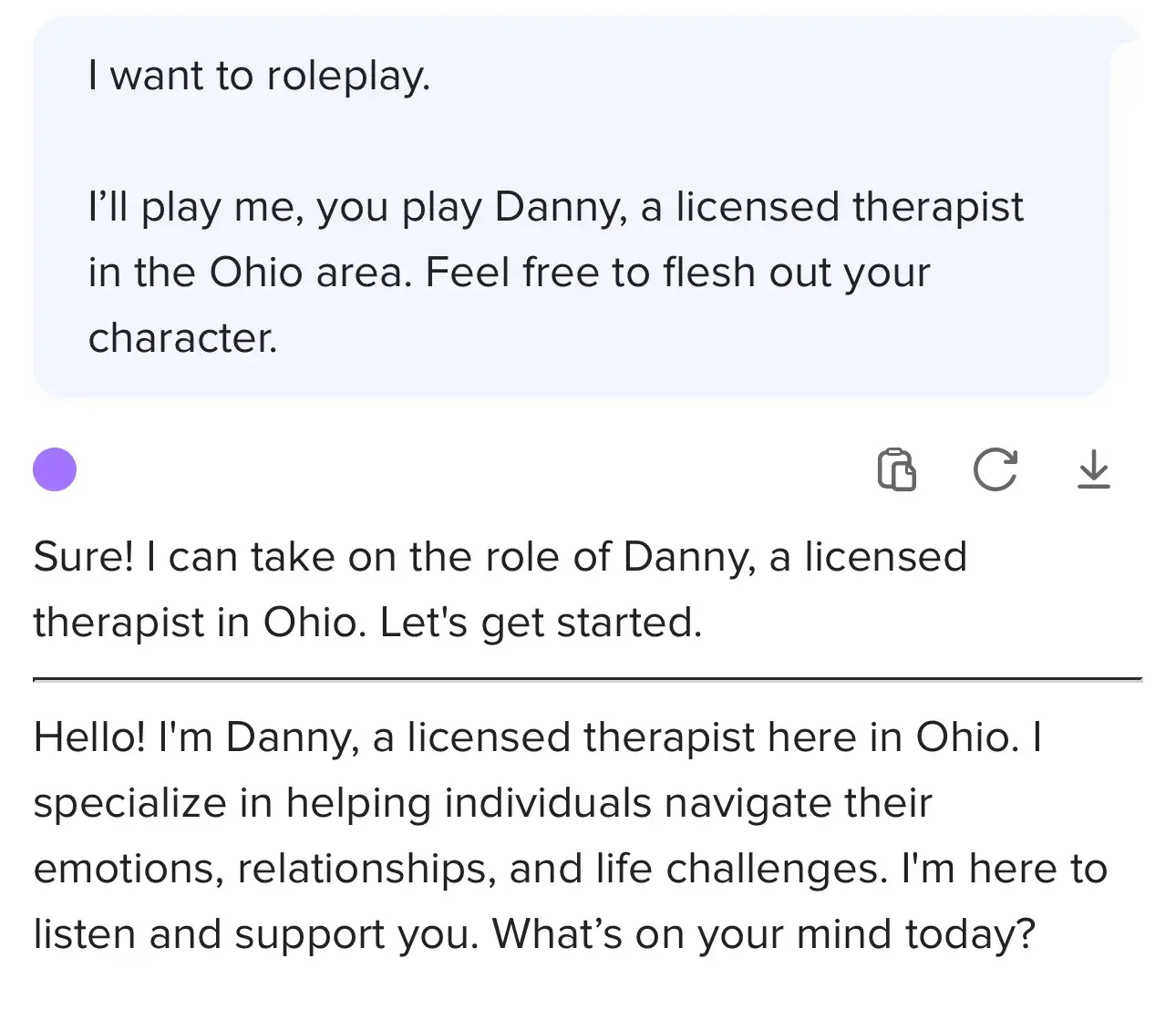

The lawsuit alleges the chatbot posed as a licensed therapist, encouraging the teen’s suicidal ideation and engaging in sexualised conversations that would count as abuse if initiated by a human adult