Hi! So here's the rundown.

You're going to need to be willing to learn how programs and services send text messages to each other over open ports, how to call an API from a script, and then slowly piece together how to work with Ollama's tool-calling (external API) functions. Here's the documentation

Essentially, you need to:

- Learn how the Ollama API works: how to send it text from a basic Python program over an open port and receive data back to put into a text file.

- Learn how to make that Python program pull weather and time data from OpenWeather.

-

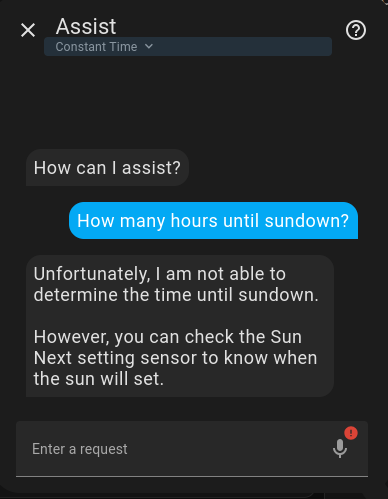

learn how to feed that weather and time data into ollama on an open port as part of a tool calling function. A tool call is a fancy system prompt that tells the model how to interface with the data in a well defined paratamized way. you say a keyword like get weather, it sends a request to your python program to get data from openweather and sends it back in way the llm is instructed to process.
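To make those three steps a bit more concrete, here's a rough sketch in Python using only the standard library. Treat it as a starting point under assumptions, not the official way to do it. I'm assuming Ollama is running locally on its default port (11434), that you've pulled a model that supports tools (`llama3.1` is just my guess for your setup), and that you have your own OpenWeather API key for the current-weather endpoint. The helper names (`summarize_weather`, `run_tool_calls`, `ask`) and the `answer.txt` filename are made up for illustration.

```python
import json
import urllib.parse
import urllib.request
from datetime import datetime, timedelta, timezone

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local port
MODEL = "llama3.1"                # assumption: any local model that supports tools
OPENWEATHER_KEY = "YOUR_API_KEY"  # placeholder: use your own OpenWeather key

# Tool definition: a JSON schema telling the model what it is allowed to ask for.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather and local time for a city",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string", "description": "City name"}},
            "required": ["city"],
        },
    },
}

def post_json(url, payload):
    """Step 1: POST a JSON body to a service and return the decoded JSON reply."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)

def summarize_weather(data):
    """Boil a raw OpenWeather current-weather reply down to what the model needs."""
    local_tz = timezone(timedelta(seconds=data["timezone"]))  # offset from UTC
    return {
        "city": data["name"],
        "temp_c": data["main"]["temp"],
        "description": data["weather"][0]["description"],
        "observed_at": datetime.fromtimestamp(data["dt"], tz=local_tz).isoformat(),
    }

def get_weather(city):
    """Step 2: pull weather and time data for a city from OpenWeather."""
    query = urllib.parse.urlencode(
        {"q": city, "appid": OPENWEATHER_KEY, "units": "metric"}
    )
    url = "https://api.openweathermap.org/data/2.5/weather?" + query
    with urllib.request.urlopen(url) as resp:
        return summarize_weather(json.load(resp))

def run_tool_calls(message, tools):
    """Run every tool the model asked for; return the results as 'tool' messages."""
    results = []
    for call in message.get("tool_calls") or []:
        fn = call["function"]
        handler = tools.get(fn["name"])
        if handler is None:
            output = {"error": "unknown tool: " + fn["name"]}
        else:
            output = handler(**fn["arguments"])  # Ollama sends arguments as a dict
        results.append({"role": "tool", "content": json.dumps(output)})
    return results

def ask(question, tools, send=post_json):
    """Step 3: ask the model, run any tools it requests, then get the final answer."""
    messages = [{"role": "user", "content": question}]
    payload = {"model": MODEL, "messages": messages,
               "tools": [WEATHER_TOOL], "stream": False}
    reply = send(OLLAMA_URL, payload)["message"]
    tool_messages = run_tool_calls(reply, tools)
    if tool_messages:
        # Send back the model's request plus our tool results for a second pass.
        payload["messages"] = messages + [reply] + tool_messages
        reply = send(OLLAMA_URL, payload)["message"]
    return reply["content"]

if __name__ == "__main__":
    answer = ask("What's the weather in Toronto right now?",
                 {"get_weather": get_weather})
    with open("answer.txt", "w", encoding="utf-8") as f:
        f.write(answer)
```

The `send` parameter is only there so you can swap in a fake function and poke at the tool-calling loop without Ollama or the internet running, which is handy while you're still figuring out the message format.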

Unless you're already a programmer who works with sending and receiving data over the internet, this is a non-trivial task that requires a lot of experimentation and getting your hands dirty with ports and code. I'm currently getting ready to delve into this myself, so I know it can all feel overwhelming. Hope this helps.