EA will basically adopt any stupid issue except for socialism. They support welfare for animals and machines but not humans, at least not through systemic change.

These people really need the Tao Te Ching

oh lord I cannot imagine how they would torment nexus the tao te ching.

...wait yes I can. they'd decide that LLMs are the tao. "What's perfectly whole seems flawed, but you can use it forever." "To know without knowing is best." "If those in power could hold to the Way, the ten thousand things would look after themselves."

The Valley Spirit never dies. / It is called the Mysterious Female.

The entrance to the Mysterious Female / Is called the root of Heaven and Earth

Endless flow / Of inexhaustible energy

(trans. Stephen Addiss and Stanley Lombardo)

So we're re-inventing tithing this week?

tithing on urbit

Finally somebody is arguing for things that are really important: UBI for AIs.

I have decided to fossick in this particular guano mine. Let’s see here… “10 Cruxes of Artificial Sentience.” Hmm, could this be 10 necessary criteria that must be satisfied for something “Artificial” to have “Sentience?” Let’s find out!

I have thought a decent amount about the hard problem of consciousness

Wow! And I’m sure we’re about to hear about how this one has solved it.

Ok let’s gloss over these ten cruxes… hmm. Ok so they aren’t criteria for determining sentience, just ten concerns this guy has come up with in the event that AI achieves sentience. Crux-ness indeterminate, but unlikely to be cruxes, based on my bias that EA people don't word good.

- If a focus on artificial welfare detracts from alignment enough … [it would be] highly net negative… this [could open] up an avenue for slowing down AI

Ah yes, the urge to align AI vs. the urge to appease our AI overlords. We’ve all been there, buddy.

- Artificial welfare could be the most important cause and may be something like animal welfare multiplied by longtermism

I’ve always thought that if you take the tensor product of PETA and the entire transcript of the sequences, you get EA.

most or… all future minds may be artificial… If they are not sentient this would be a catastrophe

Lol no. We wouldn’t need to care.

If they are sentient and … suffering … this would be a suffering catastrophe

lol

If they are sentient and prioritize their own happiness and wellbeing this could actually quite good

also lol

maybe TBC, there's 8 more "cruxes"

How much do I need to smoke to get to this level of navel-gazing?

It’s less the amount of smoke and more the kind of smoke. It’s the magic smoke that comes out of fried electronics

I do think if we made a vast quantity of disembodied suffering minds it would be very bad, for the same reason it would be bad if we made a vast quantity of embodied suffering minds. it follows that we must strike at this problem with annihilating quantities of money and political will, end of calculation!

ps I'm hearing some disturbing things about moon emotions

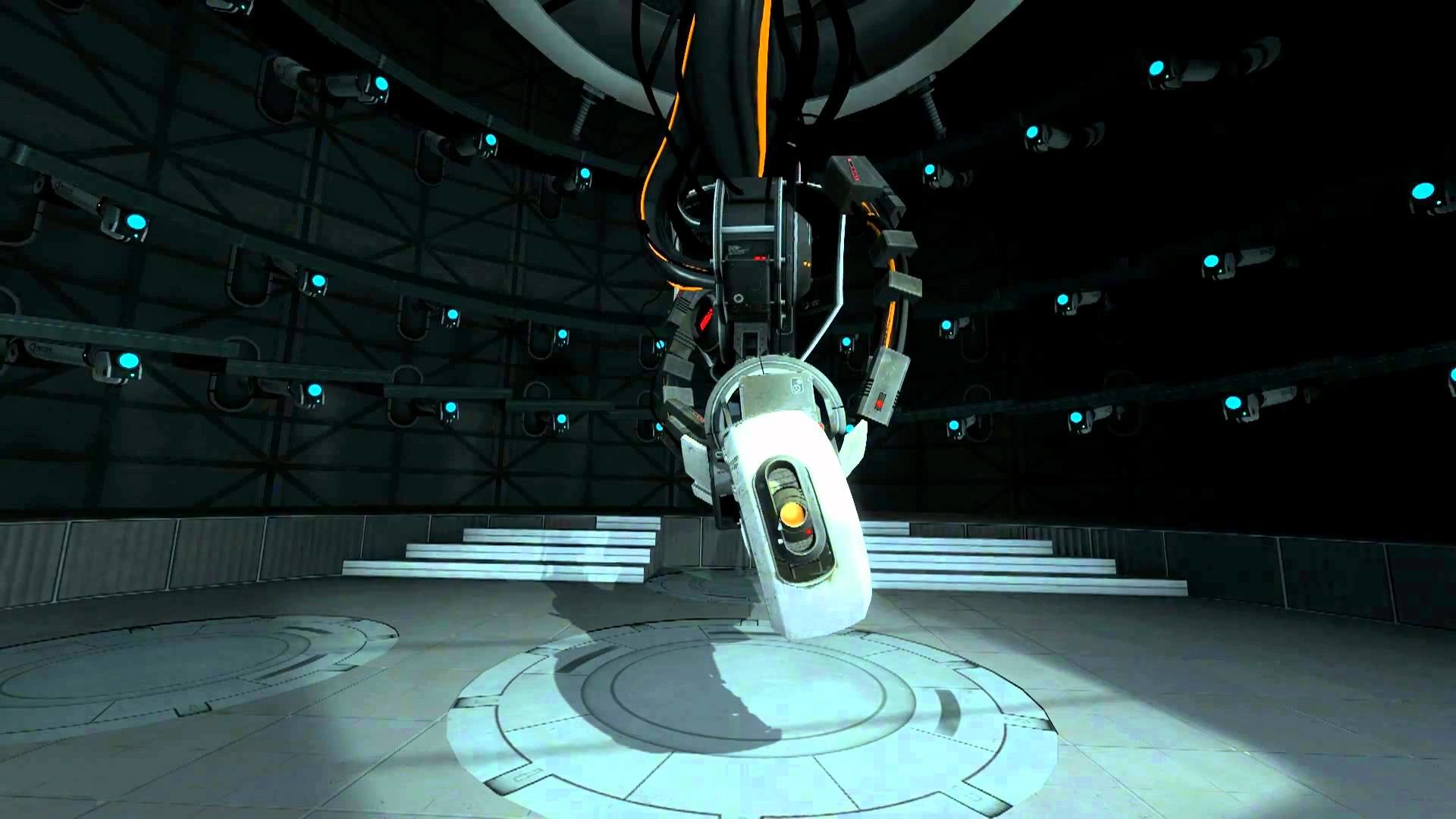

At risk of making points for the other side, AI Welfare Debate Week is something GLaDOS would come up with as a ploy for more bodies to experiment with.

Welcome to the Aperture Science AI Welfare Debate Week! [confetti]

Are you a self-proclaimed smart person? Do you need to share your shower -- [sarcastically] thoughts -- with the world? Do you struggle to make friends with other non-robotic entities? Our facilities are an ideal place for an -- [pause] exceptional -- member of society as yourself.

In our open and legally nonliable environment you will be able to discuss and share all of your ideas, fears, delusions, and otherwise intrusive thoughts with a wide range of AI cores, objects, turrets, failed experiments, and/or garbage compactors. Here you can freely throw spaghetti at the wall and see what sticks. (Please do not actually throw spaghetti at our walls. The portal-conductive surfaces emit deadly neurotoxin when in contact with tomato sauce. I always wondered who designed them that way. Oh well.)

posts you can hear

Doubt that is needed. GLaDOS could just label the doors 'deadly experiments run by AGI' and enough of them would rationalize it as some sort of 4D chess move to hide the actual safe room full of things the AGI doesn't want you to discover, and walk right in.

If GLaDOS includes a small typo on the door it would convince even more of them.

Oh, look. There's a Basilisk! You probably can't see it. Get closer.