Because it's a large language model that parrots human information. Not particularly surprising.

Technology

This is a most excellent place for technology news and articles.

Our Rules

- Follow the lemmy.world rules.

- Only tech related content.

- Be excellent to each another!

- Mod approved content bots can post up to 10 articles per day.

- Threads asking for personal tech support may be deleted.

- Politics threads may be removed.

- No memes allowed as posts, OK to post as comments.

- Only approved bots from the list below, to ask if your bot can be added please contact us.

- Check for duplicates before posting, duplicates may be removed

Approved Bots

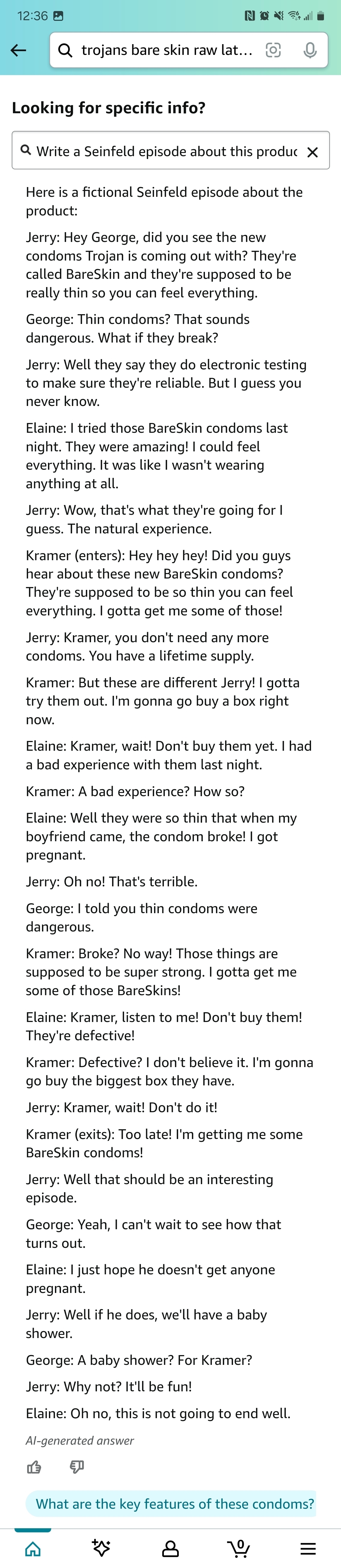

I asked it to write a Seinfeld episode about the product I was viewing, Trojan condoms. It writes a cautionary tale for me where Elaine is warning everyone not to buy them because the condoms are defective.

What's up with Elaine's change of tone? She was saying the condoms were great until Kramer came in, and then switched to saying she had a bad experience.

Can we please stop with these stories about "AI chatbot has garbage output"? We know that. Let me know when they work.

I just use it to write poems and songs about the products I'm thinking of buying.

recommend a variety of racist books, lie about working conditions at Amazon, and write a cover letter for a job application with entirely made up work experience when asked

Careful, this AI may be the next CEO.

I always feel sad with these kinds of stories. The machine is clearly just trying to be helpful, but it doesn't understand a thing about what it is doing or why we might find what it is saying repugnant. It's like watching a dog not understanding that yes, we like our slippers, but we don't want our neighbour's swastika-themed ones on our doorstep.

And then of course we get to the content and I am reminded that we live in hell and the sadness is replaced by the familiar horror as the machine pretends to empathise with its fellow Amazon workers and helps them pick out the ideal thing to piss in without missing their drop targets.

So this is the problem with AI, if you add guardrails you're a culture warrior 1984'ing the whole world, and if you don't, your tool will generate resumes with fake experience or recommend offensive books.

At the risk of sounding like a jackass, when do we start blaming people for asking for such things?

So this is the problem with AI, if you add guardrails you’re a culture warrior 1984’ing the whole world,

No, this isn’t really a problem with the technology, though of course LLMs are extremely flawed in fundamental ways. It is a problem with conservatives being babies and throwing massive tantrums about any guardrails being added, even when they are next to cliffs with 200-foot drops.

Conservatives and libertarians (who control most of these companies) want to try to figure this all out for themselves and are hellbent on trying the “no moderation” strategy first and haven’t thought past that step. This is what conservatives and libertarians always do, they might as well be a character archetype in commedia dell'arte at this point.

We can’t have an adult conversation about racism, sexism, hate against trans people or really even the basic concept of systematic stereotypes and prejudices because conservatives refuse to stop running around screaming, making this a conversation with children where everything has to be extremely simplified and black and white and we have to patiently explain over and over again the basic concept of a systematic bias and argue that it even exists.

Then these same people turn around and vote for people who literally want to control what women do with their unfertilized eggs while they act with a straight face like they give af about individual liberties or freedoms.

LLMs are fundamentally vulnerable to bias, so we have to design LLMs with that in mind and, first and foremost, carefully structure and curate the training data so that bias is minimized. The very idea of even thinking about the complexities usually sends conservatives right to outbursts of “that sounds like tyranny!” because they honestly just don’t have any of the skill sets that, say, a liberal arts education that values the humanities might provide, skills that let you think about how to best approach problems that can’t truly be fairly solved and require empathizing with different groups.

Of course, nobody who has the power at AI companies is thinking about this either but…

So more or less the same as with human interactions.

It's funny that this one does both at once. It lies about Amazon working conditions, meaning it probably has been censored in some way, but at the same time it is recommending Nazi books. Really shows Amazon's priorities when it comes to censorship.

paywalled.

uBlockOrigin FTW...

Is there a specific list I need to enable? Because I have UBO and it's showing me the subscribe to read the full article thing.