Lol lmao. (For people who don't read Dutch: our main alt-right politician lost a lot of money investing in the Luna cryptocurrency. Of course he is into crypto, and of course this site, which is pro-crypto and so pivots to his bitcoin holdings (no shock, we know cryptofash people pay the fash in crypto), is using the 'register now and get the first 10 bucks free!' trick casinos also pull.)

TechTakes


Today's "Luigi isn't sexy" poster is Thomas Ptacek. The funniest example is probably this reply on the orange site:

That's an extrapolation from a poll, not literally 50 million people…

A cryptographer not believing in statistical analysis! I can't stop giggling, sorry.

One thing to keep in mind about Ptacek is that he will die on the stupidest of hills. Back when Y Combinator president Garry Tan tweeted that members of the San Francisco board of supervisors should be killed, Ptacek defended him to the extent that the mouth-breathers on HN even turned on him.

I was trying to remember at which point I unfollowed him, and I think it was exactly this nonsense

I liked ptaček better when he still knew he doesn't know everything.

I had to use clipchamp for something recently and my god, what an awful, enshittified piece of software. It's sending me emails now!

tangentially: I've been getting reminded of a bunch of services existing, by way of pointless "your year in review" bullshit

fuck spotify for starting that misfeature, and fuck everyone else for falling over themselves to get On Trend

And, whilst I’m here, a post from someone who tried using copilot to help with software dev for a year.

I think my favourite bit was

Don’t use LLMs for autocomplete, use them for dialogues about the code.

Tried that. It’s worse than a rubber duck, which at least knows to stay silent when it doesn’t know what it’s talking about.

https://infosec.exchange/@david_chisnall/113690087142854474

(and also https://en.m.wikipedia.org/wiki/Rubber_duck_debugging for those who haven’t come across it)

Interesting article about netflix. I hadn’t really thought about the scale of their shitty forgettable movie generation, but there are apparently hundreds and hundreds of these things with big names attached and no-one watches them and no-one has heard of them and apparently Netflix doesn’t care about this because they can pitch magic numbers to their shareholders and everyone is happy.

“What are these movies?” the Hollywood producer asked me. “Are they successful movies? Are they not? They have famous people in them. They get put out by major studios. And yet because we don’t have any reliable numbers from the streamers, we actually don’t know how many people have watched them. So what are they? If no one knows about them, if no one saw them, are they just something that people who are in them can talk about in meetings to get other jobs? Are we all just trying to keep the ball rolling so we’re just getting paid and having jobs, but no one’s really watching any of this stuff? When does the bubble burst? No one has any fucking clue.”

What a colossal waste of money, brains, time and talent. I can see who the market for stuff like sora is, now.

I feel like before Redbox went under, it was also a dumping ground for this sort of thing. For instance, that mid-budget Western "Rust" where Alec Baldwin killed the camerawoman on set felt like it was destined for this sort of distribution strategy. Who's clamoring to go out to the theater to see a Western with Alec Baldwin these days? But it might stand out among all the other slop when you're looking to turn your brain off on a Saturday night.

See also the rise of the "geezer-teasers," where a random 80s/90s action star signs up to appear in the first and last 10 minutes of a generic action movie filmed someplace inexpensive, most likely eastern Europe or southeast Asia. There were a lot of those. Perhaps my favorite, that I still want to watch someday, was Danny Trejo and Danny Glover in "Bad-Ass 2: Bad-Asses."

ai fan asks chempros about their use of lying boxes: majority opinion is that this shit is useless, leaks confidential information and is a massive legal liability https://www.reddit.com/r/Chempros/comments/1hgxvsj/ai_in_the_workplace_how_have_chemistsscientists/

top response:

It’s a good trick to be instantly dismissed. No, really, that’s the latest I had in terms of company policy. If you’re caught using AI for anything, you’re out the door. It’s a lawsuit waiting to happen (and a lawsuit we cannot defend against). Gross misconduct, not eligible for rehire, and all that. Same as intentionally misrepresenting data (because it is). (Pharma)

Days since last comparison of ChatGPT to shitty university student: zero

More broadly I think it makes more sense to view LLMs as an advanced rubber ducking tool - like a broadly knowledgeable undergrad you can bounce ideas off to help refine your thinking, but whom you should always fact check because they can often be confidently wrong.

Seriously why does everyone like this analogy?

As a person whose job has involved teaching undergrads, I can say that the ones who are honestly puzzled are helpful, but the ones who are confidently wrong are exasperating for the teacher and bad for their classmates.

good question, i have no clue, especially since i wasn't like this as an undergrad. it's really not hard to say "i don't know, boss" or "more experimental data is needed", and chatgpt will never say this

a shitty undergrad probably won't leak confidential info either (maybe on the sender side, but never on the receiver side, as in receiving unexplained stolen confidential info from cosmic noise)

From the replies:

In cGMP and cGLP you have to be able to document EVERYTHING. If someone, somewhere messes up, the company and authorities theoretically should be able to trace it back to that incident. Generative AI is more-or-less a black box by comparison; plus how often it’s confidently incorrect is well known and well documented. To use it in a pharmaceutical industry would be teetering on gross negligence and asking for trouble.

Also suppose that you use it in such a way that it helps your company profit immensely and—uh oh! The data it used was the patented IP of a competitor! How would your company legally defend itself? Normally it would use the documentation trail to prove that they were not infringing on the other company’s IP, but you don’t have that here. What if someone gets hurt? Do you really want to make the case that you just gave Chatgpt a list of results and it gave a recommended dosage for your drug? Probably not. When validating SOPs are they going to include listening to Chatgpt in it? If you do, then you need to make sure that OpenAI has their program to the same documentation standards and certifications that you have, and I don’t think they want to tangle with the FDA at the moment.

There’s just so, SO many things that can go wrong using AI casually in a GMP environment that end with your company getting sued and humiliated.

And a good sneer:

With a few years and a couple billion dollars of investment, it’ll be unreliable much faster.

for anyone wondering, cgmp/cglp means current good manufacturing/laboratory practices, and it's mostly a set of paperwork concerning audits etc and repeatability of everything

I assume a few of these good practices were discovered after a certain price in blood was paid.

everything has to be validated, certified, calibrated, written down and accessible for audit, on top of, you know, actual physical side of good manufacturing like keeping everything clean and in spec. some of that is to control for random fuckups and some is for cover-your-ass purposes. but yeah, good couple thousand people died before it became an actual globally enforced thing

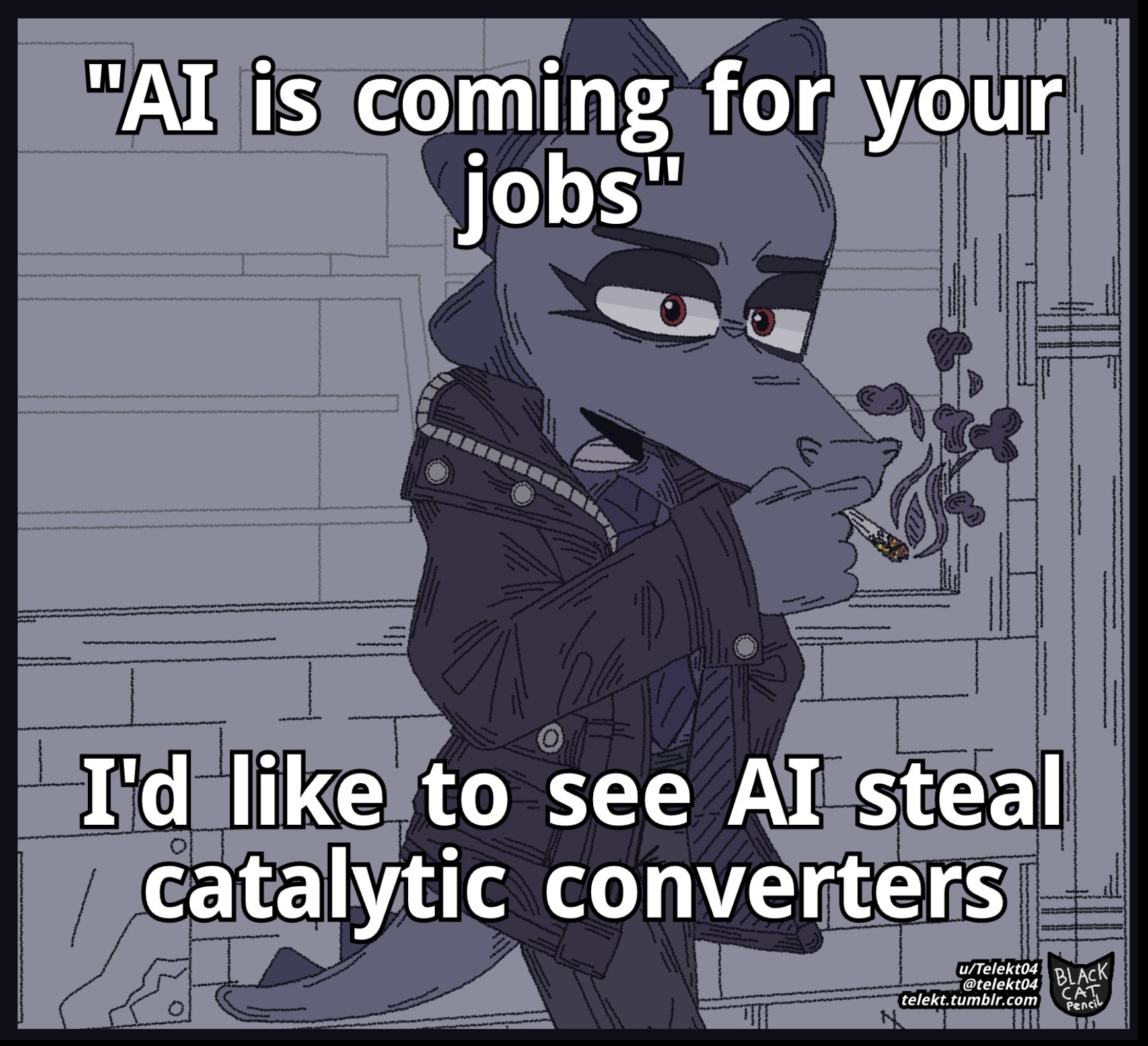

AI could be a viable test for bullshit jobs as described by Graeber. If the disinformatron can effectively do your job then doing it well clearly doesn't matter to anyone.

idk, genai can fuck up a couple of these too

It's not an exhaustive search technique, but it may be an effective heuristic if anyone is planning The Revolution(tm).

Not A Sneer But: "Princ-wiki-a Mathematica: Wikipedia Editing and Mathematics" and a related blog post. Maybe of interest to those amongst us whomst like to complain.

I saw this floating around fedi (sorry, don't have the link at hand right now) and found it an interesting read, partly because it helped codify why editing Wikipedia is not the hobby for me. Even when I'm covering basic, established material, I'm always tempted to introduce new terminology that I think is an improvement, or to highlight an aspect of the history that I feel is underappreciated, or just to make a joke. My passion project — apart from the increasingly deranged fanfiction, of course — would be something more like filling in the gaps in open-access textbook coverage.

very interesting, thank you for sharing

In further bluesky news, the team have a bit of an elon moment and forget how public they made everything.

https://bsky.app/profile/miriambo.bsky.social/post/3ldq2c7lu6c25 (only readable if you are logged in to bluesky)

the team have a bit of an elon moment

"Oh shit, which one of them endorsed the German neo-Nazis?"

Aaron likes a porn post

"Whew."