Over the years I have accumulated a sizable music library (mostly flacs, adding up to a bit less than 1TB) that I now want to reorganize (ie. gradually process with Musicbrainz Picard).

Since the music lives in my NAS, flacs are relatively big and my network speed is 1GB, I insalled on my computer a hdd I had laying around and replicated the whole library there; the idea being to work on local files and the sync them to the NAS.

I setup Syncthing for replication and... everything works, in theory.

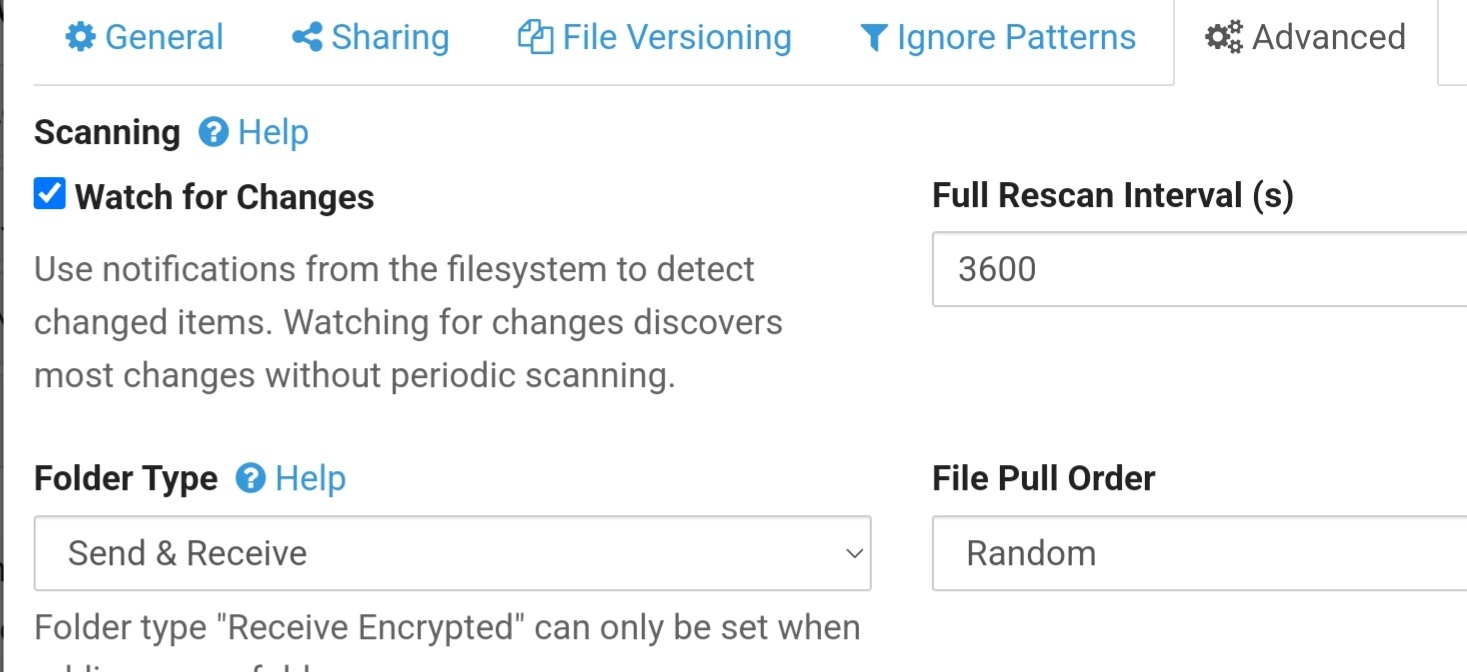

In practice, Syncthing loves to rescan the whole library (given how long it takes, it must be reading all the data and computing checksums rather than just scanning the filesystem metadata - why on earth?) and that means my under-powered NAS (Celeron N3150) does nothing but rescanning the same files over and over.

Syncthing by default rescans directories every hour (again, why on earth?), but it still seem to rescan a whole lot even after I have set rescanIntervalS to 90 days (maybe it rescans once regardless when restarted?).

Anyway, I am looking into alternatives.

Are there any you would recommend? (FOSS please)

Notes:

- I know I could just schedule a periodic rsync from my PC to the NAS, but I would prefer a bidirectional solution if possible (rsync is gonna be the last resort)

- I read about unison, but I also read that it's not great with big jobs and that it too scans a lot

- The disks on my NAS go to sleep after 10 minutes idle time and if possible I would prefer not waking them up all the time (which would most probably happen if I scheduled a periodic rsync job - the NAS has RAM to spare, but there's no guarantee it'll keep in cache all the data rsync needs)