this post was submitted on 26 Oct 2024

1237 points (99.2% liked)

Piracy: ꜱᴀɪʟ ᴛʜᴇ ʜɪɢʜ ꜱᴇᴀꜱ

54716 readers

222 users here now

⚓ Dedicated to the discussion of digital piracy, including ethical problems and legal advancements.

Rules • Full Version

1. Posts must be related to the discussion of digital piracy

2. Don't request invites, trade, sell, or self-promote

3. Don't request or link to specific pirated titles, including DMs

4. Don't submit low-quality posts, be entitled, or harass others

Loot, Pillage, & Plunder

📜 c/Piracy Wiki (Community Edition):

💰 Please help cover server costs.

|

|

|---|---|

| Ko-fi | Liberapay |

founded 1 year ago

MODERATORS

you are viewing a single comment's thread

view the rest of the comments

view the rest of the comments

That would be good if they did that but that is not the intent of the org, the purpose of the tool, the expected or even available outcome.

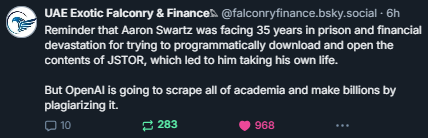

It's important to remember this data is not being scraped to make it available or presentable but to make a machine that echos human authography convincingly more convincingly.

On an extremely simplified level, it doesn't want to answer 1+1=? with "2", it wants to appear like a human confidently answering an arithmetic question, even if the exchange is "1+1=?" "yes, 2+3 does equal 9"

Obviously it can handle simple sums, this is an illustrative example

It demonstrably is already though. Paste a document in, then ask questions about its contents; the answer will typically take what's written there into account. Ask about something you know is in a Wikipedia article that would have been part of its training data, same deal. If you think it can't do this sort of thing, you can just try it yourself.

I am well aware that LLMs can struggle especially with reasoning tasks, and have a bad habit of making up answers in some situations. That's not the same as being unable to correlate and recall information, which is the relevant task here. Search engines also use machine learning technology and have been able to do that to some extent for years. But with a search engine, even if it's smart enough to figure out what you wanted and give you the correct link, that's useless if the content behind the link is only available to institutions that pay thousands a year for the privilege.

Think about these three things in terms of what information they contain and their capacity to convey it:

A search engine

Dataset of pirated contents from behind academic paywalls

A LLM model file that has been trained on said pirated data

The latter two each have their pros and cons and would likely work better in combination with each other, but they both have an advantage over the search engine: they can tell you about the locked up data, and they can be used to combine the locked up data in novel ways.

the problem is you can't take those weaknesses and call it "academic" - it's a contradiction in terms.

When a real academic makes up answers its a problem, when chatgpt does it its part of the expectation.