this post was submitted on 11 Oct 2024

Programmer Humor

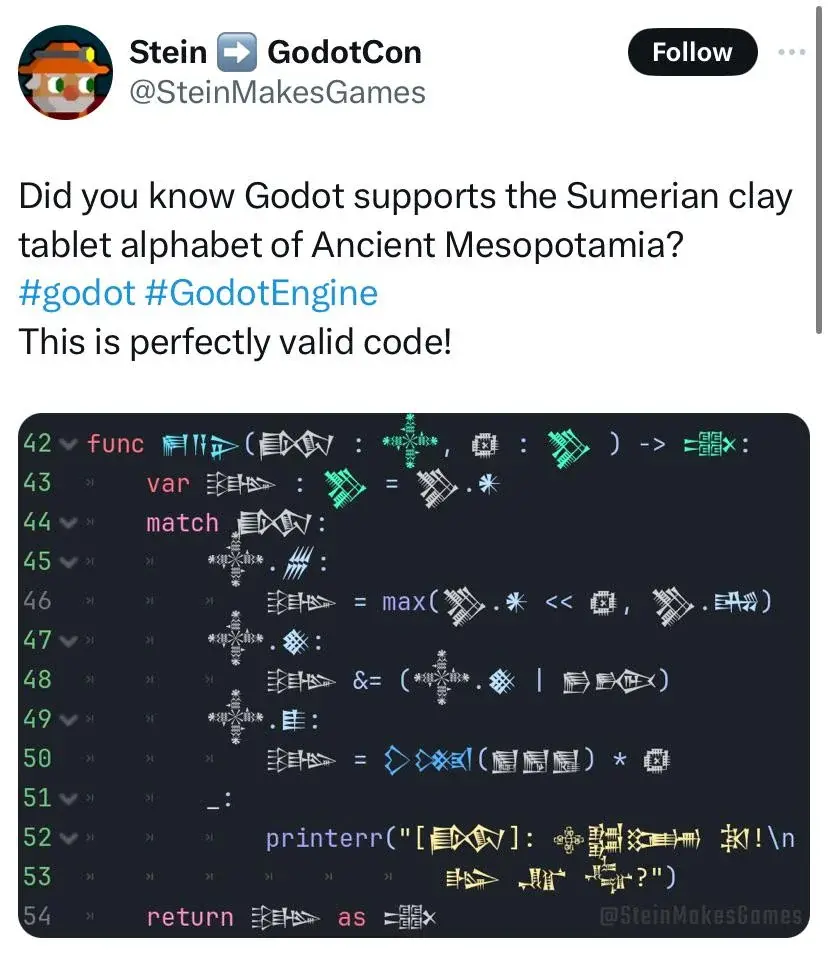

I thought most sane, modern languages use Unicode block identification to determine whether a character can be used in a valid identifier. For example, none of the 'numeric' Unicode characters can appear at the beginning of an identifier, just as you can't have '3var'.

So once your programming language supports Unicode, it automatically supports any human language whose script falls in those particular blocks.
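
As a rough illustration of that rule, here is a minimal Python sketch. It leans on `str.isidentifier()`, which applies Python's own identifier rules (built on the Unicode `XID_Start`/`XID_Continue` properties rather than blocks as such), and on `unicodedata.category` to show why a leading digit-like character gets rejected. The helper name `explain_identifier` and the sample strings are made up for this example.

```python
import unicodedata


def explain_identifier(name: str) -> tuple[bool, str]:
    """Return (valid Python identifier?, general category of the first character)."""
    return name.isidentifier(), unicodedata.category(name[0])


samples = [
    "var3",        # ASCII letter first, digit later
    "3var",        # ASCII digit first
    "\u0663var",   # ARABIC-INDIC DIGIT THREE first: a digit (Nd), same problem as "3var"
    "var\u0663",   # the same digit is fine once it is not in first position
    "\u043f\u0435\u0440\u0435\u043c\u0435\u043d\u043d\u0430\u044f",  # a Cyrillic word
]

for s in samples:
    # ascii() keeps the output readable in terminals without the right fonts
    print(ascii(s), explain_identifier(s))
```

Nothing in the check is specific to Arabic or Cyrillic: the decision comes from character properties, so other scripts get the same treatment without per-language code.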

Sanity is subjective here. There are reasons to disallow non-ASCII characters, for example to prevent identical-looking characters from causing sneaky bugs in the code, like this but unintentional: https://en.wikipedia.org/wiki/IDN_homograph_attack (and yes, don't you worry, this absolutely can happen unintentionally).
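
To make the lookalike problem concrete, here is a small Python sketch. The names `honest`, `lookalike`, and `script_hints` are hypothetical, and the "first word of the Unicode character name" trick is only a crude stand-in for a real script check, not how any particular compiler or linter does it.

```python
import unicodedata


def script_hints(identifier: str) -> set[str]:
    """Crude heuristic: collect the first word of each letter's Unicode name."""
    return {
        unicodedata.name(ch, "UNKNOWN").split()[0]
        for ch in identifier
        if ch.isalpha()
    }


honest = "payload"
lookalike = "p\u0430yload"  # U+0430 CYRILLIC SMALL LETTER A instead of Latin "a"

print(honest == lookalike)        # False: two different identifiers
print(lookalike.isidentifier())   # True: still a perfectly legal name
print(script_hints(honest))
print(script_hints(lookalike))    # more than one entry hints at mixed scripts
```

Both names render almost identically in most fonts, so a typo made with the wrong keyboard layout can silently create a second variable.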

Sorry, I forgot about this. I meant to say that any sane modern language that allows Unicode should use the block specifications (e.g. to classify characters as alphabetic, numeric, symbols, alphanumeric, etc.) and apply the same rules it uses for ASCII. That way it doesn't have to support each human language individually.

Oh, that I agree with. But then there's the mess of Unicode updates, and if you're using an old version of the compiler that was built with an old version of Unicode, it might not recognize every character you use...
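
Python happens to expose this version dependence directly, so here is a hedged sketch of how you might check it. `unicodedata.unidata_version` is the Unicode database version the interpreter was built with; the helper `is_assigned` is a made-up name, and it treats general category `Cn` (unassigned, which also covers noncharacters) as "this runtime doesn't know the character".

```python
import unicodedata


def is_assigned(ch: str) -> bool:
    """True if this runtime's Unicode database has an assignment for ch."""
    return unicodedata.category(ch) != "Cn"


print("Unicode database version:", unicodedata.unidata_version)

# U+1FAE9 is a relatively recent addition; an older runtime reports it as unassigned.
for ch in ["a", "\U0001FAE9"]:
    print(f"U+{ord(ch):04X}", "assigned" if is_assigned(ch) else "unknown to this runtime")
```

The same source file can therefore be valid under one interpreter build and rejected under an older one, which is exactly the mess described above.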